A voice keyboard that keeps your voice on your phone.

Install Yaps on Android for offline dictation, a familiar full-size keyboard, and no screen capture. Scan the QR on desktop, or tap the Play badge on mobile.

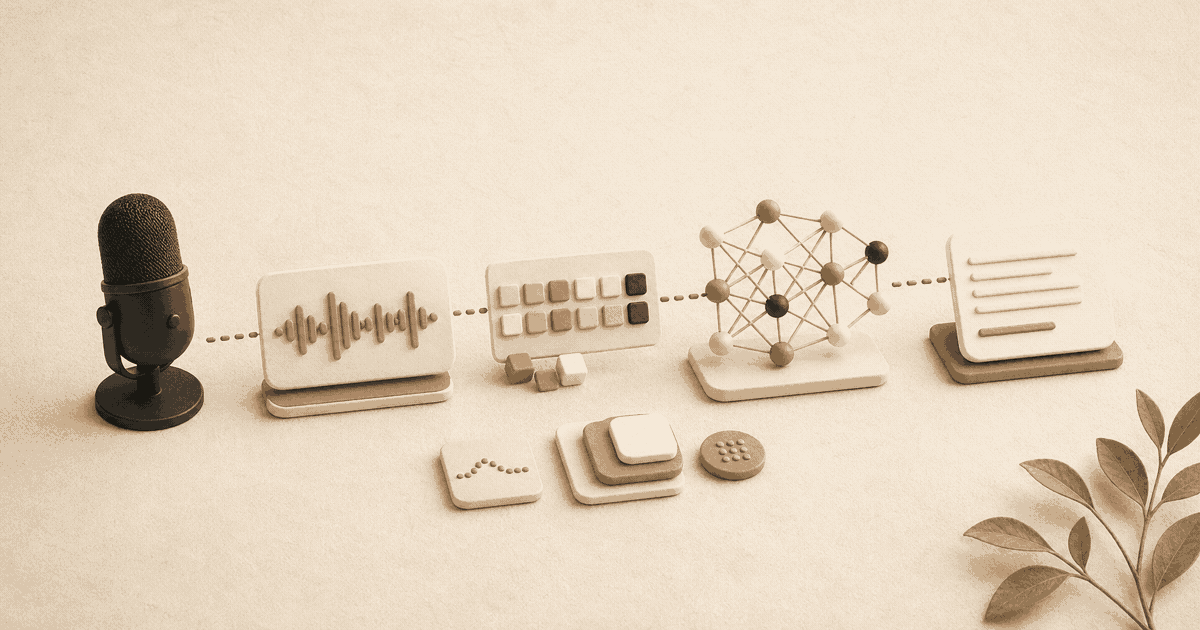

Modern speech recognition isn't magic — it is a carefully orchestrated pipeline of acoustic processing, neural networks, and language modeling. Here is the full anatomy of an ASR system, from raw audio to text, and how transformer models displaced classical HMM approaches.

When you hold down Fn and start speaking to Yaps, your words appear on screen almost instantly - properly capitalized, punctuated, and formatted. It feels simple. Behind the scenes, it is anything but.

Modern speech recognition is a pipeline of sophisticated technologies working in concert, each solving a piece of the puzzle that turns acoustic vibrations into meaningful text. But there is a deeper question that most technical guides overlook: where that pipeline runs matters just as much as how it works.

In this comprehensive guide, we will walk through the entire speech recognition pipeline, explain the engineering decisions that make real-time dictation feel effortless, and examine why on-device processing represents a fundamental shift in how speech-to-text should work - especially when privacy, reliability, and latency are non-negotiable.

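Every modern ASR system - on your Mac, your phone, or a cloud server - is built from the same five components:

1. Audio capture and preprocessing (noise suppression, voice activity detection)
2. Feature extraction (log-mel spectrograms)
3. An acoustic model, typically a transformer or conformer network, that maps audio to linguistic units
4. A language model that resolves ambiguity using grammar and word-sequence probability
5. A decoder and post-processor that picks the most likely transcription and adds punctuation, capitalisation, and formatting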

Classical systems wired these together with hand-tuned rules. Modern end-to-end models collapse stages 2–4 into a single neural network trained on raw audio and text. Stages 1 and 5 still earn their keep as pre- and post-processing scaffolding.

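At a high level, the five components above collapse into four practical stages most engineers think about when designing or debugging an ASR pipeline:

1. Audio preprocessing (noise suppression and voice activity detection)
2. Acoustic modeling
3. Language modeling
4. Post-processing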

Each stage presents distinct engineering challenges. The choices you make at every level - what models to use, where to run them, how to optimize them - determine whether the result feels magical or frustrating. Let's dive into each stage in detail.

Before any AI touches your speech, the raw audio needs to be cleaned up. Your microphone captures everything - your voice, keyboard clicks, the hum of your fan, that construction outside your window. The first job is isolating what matters.

Yaps uses a real-time noise suppression model that runs locally on your device. This neural network has been trained on thousands of hours of noisy audio to distinguish speech from background noise. It operates on 20-millisecond audio frames, meaning it processes and cleans your audio 50 times per second.
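To make the frame arithmetic concrete, here is a minimal Python sketch of fixed-length framing. The 16 kHz sample rate and the `frame_audio` helper are assumptions for illustration, not details of the Yaps pipeline.

```python
def frame_audio(samples, sample_rate=16_000, frame_ms=20):
    """Split a list of samples into non-overlapping fixed-length frames.

    sample_rate=16_000 is an assumed value; speech models commonly use it,
    but the article does not state which rate Yaps uses.
    """
    frame_len = sample_rate * frame_ms // 1000       # samples per frame
    usable = len(samples) - len(samples) % frame_len  # drop the ragged tail
    return [samples[i:i + frame_len] for i in range(0, usable, frame_len)]


one_second = [0.0] * 16_000
frames = frame_audio(one_second)
print(len(frames), len(frames[0]))  # 50 320
```

At 20 milliseconds per frame, one second of audio yields 50 frames, which is where the "50 times per second" figure comes from.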

The key challenge is doing this without introducing artifacts - the hollow, underwater quality you hear on bad conference calls. Our model uses a technique called spectral masking, where it learns which frequency bands contain speech and which contain noise, then selectively attenuates the noise while preserving the natural quality of your voice.

Spectral masking works by analyzing the frequency spectrum of each audio frame and generating a mask - a set of multipliers between 0 and 1 for each frequency bin. Frequency bins dominated by noise get multiplied by values close to zero, effectively suppressing them. Bins dominated by speech pass through largely untouched. The result is clean, natural-sounding audio that retains the speaker's vocal characteristics without the robotic quality of simpler noise-gating approaches.
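The masking step itself is simple enough to sketch in a few lines of Python. In a real system a neural network predicts the mask; in this illustration we assume the speech and noise power per bin are already known and use a Wiener-style ratio as a stand-in. The function names are hypothetical.

```python
def spectral_mask(speech_power, noise_power):
    """Return one multiplier in [0, 1] per frequency bin.

    Stand-in for a learned mask: uses the ratio speech / (speech + noise),
    which assumes both power estimates are available. A trained model
    would predict these multipliers directly from the noisy spectrum.
    """
    mask = []
    for s, n in zip(speech_power, noise_power):
        total = s + n
        mask.append(s / total if total > 0 else 0.0)
    return mask


def apply_mask(magnitudes, mask):
    """Scale each frequency bin's magnitude by its mask value."""
    return [m * g for m, g in zip(magnitudes, mask)]


# Three bins: mostly speech, pure noise, and an even mix.
mask = spectral_mask([9.0, 0.0, 1.0], [1.0, 4.0, 1.0])
cleaned = apply_mask([3.0, 2.0, 1.4], mask)
```

The speech-dominated bin keeps most of its energy, the noise-only bin is zeroed, and the mixed bin is halved.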

Because this processing happens entirely on your Mac, there is zero network latency added to the pipeline. Cloud-based alternatives must upload raw audio, process it on remote servers, and return the cleaned signal - adding anywhere from 50 to 300 milliseconds of delay depending on connection quality.

Not everything you say should be transcribed. Coughs, throat-clearing, background conversations - the system needs to know when you are actually dictating. Voice Activity Detection (VAD) is a lightweight classifier that runs continuously, flagging audio frames that contain intentional speech.

Our VAD model is particularly tuned for dictation patterns. It understands that a pause of two seconds mid-sentence is thinking time, not the end of an utterance. This prevents the fragmentation you see in many speech-to-text tools, where pauses cause the system to submit partial, broken transcriptions.

This is a subtle but critical differentiator. Many cloud-based transcription services use generic VAD models optimized for conversation (short turn-taking exchanges), not for dictation (long-form, thoughtful monologues with natural pauses). The result is that cloud tools often fragment your speech into disconnected chunks, losing the thread of your thought. Yaps treats dictation as its own interaction paradigm and tunes accordingly.
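One way to picture dictation-tuned pause handling is as a grouping rule applied on top of per-frame speech flags. The sketch below is an assumption-laden illustration: `group_utterances` is a hypothetical helper, the flags would come from whatever VAD classifier is in use, and the two-second threshold simply mirrors the example above.

```python
def group_utterances(is_speech, frame_ms=20, max_pause_ms=2000):
    """Merge speech frames into utterances, bridging pauses up to max_pause_ms.

    is_speech: list of booleans, one per frame (output of some VAD classifier).
    Returns a list of (start_frame, end_frame_exclusive) tuples.
    """
    max_gap = max_pause_ms // frame_ms  # longest tolerated pause, in frames
    utterances = []
    start = None        # first frame of the utterance being built
    last_speech = None  # most recent frame flagged as speech
    for i, flag in enumerate(is_speech):
        if not flag:
            continue
        if start is None:
            start = i
        elif i - last_speech - 1 > max_gap:
            # The silence was too long: close the previous utterance.
            utterances.append((start, last_speech + 1))
            start = i
        last_speech = i
    if start is not None:
        utterances.append((start, last_speech + 1))
    return utterances


# 0.2 s speech, 1 s pause, 0.2 s speech, 3 s pause, 0.1 s speech
flags = [True] * 10 + [False] * 50 + [True] * 10 + [False] * 150 + [True] * 5
print(group_utterances(flags))  # [(0, 70), (220, 225)]
```

The one-second pause is treated as thinking time and bridged; the three-second pause ends the utterance. A conversation-tuned VAD would behave like the same function with a much smaller `max_pause_ms`.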

This is where the heavy lifting happens. The acoustic model takes preprocessed audio and produces a sequence of probable linguistic units - phonemes, word pieces, or characters, depending on the architecture.

Raw audio waveforms contain far more information than a speech model needs. The first step is converting the waveform into a more compact representation. Yaps uses log-mel spectrograms - a representation that mimics how the human ear perceives sound.

A spectrogram breaks audio into frequency bands over time. The "mel" scale warps these frequencies to match human perception (we are more sensitive to differences in low frequencies than high). The "log" transformation compresses the dynamic range, similar to how our ears perceive loudness logarithmically.
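Both transformations are short formulas. The Python sketch below uses one common mel convention (the HTK-style formula); the article does not say which variant Yaps uses, so treat the constants as an assumption.

```python
import math


def hz_to_mel(hz):
    """Convert frequency in Hz to mels (HTK-style formula, one of several conventions)."""
    return 2595.0 * math.log10(1.0 + hz / 700.0)


def log_compress(energy, eps=1e-10):
    """Compress dynamic range; eps avoids log(0) on silent bins."""
    return math.log10(max(energy, eps))


# The same 100 Hz step covers far more of the mel scale at low frequencies.
low_step = hz_to_mel(200) - hz_to_mel(100)
high_step = hz_to_mel(8100) - hz_to_mel(8000)
print(low_step > high_step)  # True
```

Because equal steps in mels correspond to wider and wider steps in Hz as frequency rises, a mel filterbank spends most of its resolution on the low frequencies where human hearing is most discriminating.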

The result is a 2D image-like representation of your speech, where the x-axis is time, the y-axis is frequency, and the intensity represents energy. This is what the neural network actually processes - and it is remarkably efficient. A full minute of high-fidelity audio, which might be 10 megabytes as a raw waveform, compresses down to roughly 200 kilobytes as a mel spectrogram while retaining all the information needed for accurate transcription.

For thirty years, speech recognition ran on Hidden Markov Models (HMMs) layered over hand-crafted phoneme dictionaries and n-gram language models. Dragon NaturallySpeaking, Siri's early years, and most enterprise dictation from the 1990s through the late 2010s used variants of this stack. It worked, but it demanded heavy engineering, per-language pronunciation dictionaries, and manual feature work.

The end-to-end era - kicked off by the attention-based Listen-Attend-Spell (2015), then carried by transformer-based models such as Conformer (2020) and Whisper (2022), and by the RNN-Transducer family Parakeet uses - collapsed the stack into a single neural network trained end-to-end on audio and text.

| Dimension | Classical (HMM + GMM/DNN) | Modern (Transformer / Conformer) |
|---|---|---|
| Pipeline | Separate acoustic + pronunciation + language models | Single end-to-end network |
| Training data | Tens of hours, heavily annotated | Hundreds of thousands of hours, weakly labelled |
| Accent robustness | Brittle; per-accent tuning needed | Strong out of the box |
| Pronunciation dictionary | Required per language | Learned implicitly |
| Latency | Low, predictable | Higher, mitigated by streaming + speculative decoding |
| Edge deployment | Small footprint | Larger but quantisable to fit Apple Silicon |
| Examples | Kaldi, Dragon (legacy), Sphinx, SAPI | Whisper, Parakeet, Conformer-RNN-T, Apple on-device ASR |

The classical stack still has a place in narrow domains — voice dialling, command-and-control on weak hardware, certain telephony pipelines. For open-vocabulary dictation in 2026, transformers have effectively won. The remaining question is where you run it — which is where the cloud-versus-on-device debate lives.

The landscape of speech recognition changed dramatically with the release of OpenAI Whisper, an open-source speech recognition model trained on 680,000 hours of multilingual audio. Whisper demonstrated that a single, large transformer model could achieve near-human accuracy across dozens of languages without the complex, multi-component pipelines that dominated earlier approaches.

Whisper uses an encoder-decoder transformer architecture - the same family of models behind large language models like GPT, but adapted for audio input:

The encoder processes the mel spectrogram through multiple layers of self-attention, building increasingly abstract representations of the audio. Early layers capture low-level acoustic features (vowel formants, consonant bursts), while deeper layers capture higher-level patterns (syllable structure, speaking rhythm, accent characteristics).

The decoder generates text tokens auto-regressively - one at a time, each conditioned on the audio encoding and all previously generated tokens. This is what gives the model its ability to handle ambiguity. When it encounters a sound that could be "their," "there," or "they're," the decoder uses context from the rest of the utterance to choose correctly.
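The auto-regressive loop can be sketched in Python as follows. This is a toy: `toy_score_next` and `TOY_TABLE` are hypothetical stand-ins for a trained decoder, and the sketch uses greedy selection, whereas real systems typically use beam search or sampling.

```python
def greedy_decode(score_next, audio_encoding, eos="<eos>", max_len=20):
    """Generate tokens one at a time, each conditioned on all previous tokens."""
    tokens = []
    for _ in range(max_len):
        scores = score_next(audio_encoding, tuple(tokens))
        best = max(scores, key=scores.get)  # greedy: take the top-scoring token
        if best == eos:
            break
        tokens.append(best)
    return tokens


# Hypothetical scores a decoder might assign given the audio and the prefix.
TOY_TABLE = {
    (): {"they're": 0.2, "their": 0.7, "<eos>": 0.1},
    ("their",): {"dog": 0.8, "<eos>": 0.2},
    ("their", "dog"): {"barked": 0.6, "<eos>": 0.4},
    ("their", "dog", "barked"): {"<eos>": 1.0},
}


def toy_score_next(audio_encoding, prefix):
    """Toy scorer: ignores the audio and looks up the prefix in a fixed table."""
    return TOY_TABLE.get(prefix, {"<eos>": 1.0})


print(greedy_decode(toy_score_next, audio_encoding=None))
# ['their', 'dog', 'barked']
```

The important structural point is the `tuple(tokens)` argument: every prediction sees the full history, which is what lets context disambiguate homophones.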

What made Whisper transformative was not just its architecture but its training data. By training on hundreds of thousands of hours of diverse audio - different accents, recording conditions, background noise levels, and speaking styles - the model developed remarkable robustness that previous systems lacked.

Yes, Whisper can run locally on a Mac. This is one of the most significant developments in speech recognition over the past two years. Projects like whisper.cpp (a C/C++ port of Whisper by Georgi Gerganov) and Argmax's WhisperKit have made it possible to run Whisper-class models entirely on-device, with no internet connection and no data leaving your machine.

Yaps builds on this foundation. Our acoustic model is derived from the Whisper architecture but has been extensively optimized for real-time, on-device dictation on macOS. The key optimizations include:

The result is a model that runs locally with accuracy matching cloud services from major providers - something that would have been impossible as recently as 2023.

Many speech recognition systems wait until you stop speaking to process the entire utterance at once. This creates a noticeable delay between speaking and seeing text. Yaps uses streaming recognition: the model begins producing text while you are still speaking.

This is technically challenging because early in an utterance, the model has limited context. It might initially transcribe "I want to book a" as the beginning of a hotel reservation before hearing "flight to Tokyo." Our model handles this with speculative decoding - it produces a best guess in real-time but maintains the ability to revise earlier tokens as more audio arrives. On screen, you see text appearing smoothly, with occasional subtle corrections as the model refines its understanding.

Speculative decoding works by running two passes simultaneously: a fast, lightweight pass that generates initial predictions with low latency, and a more thorough pass that verifies and corrects those predictions as more context becomes available. The user sees the fast pass first, with corrections applied so smoothly that the process feels like continuous, fluid transcription rather than a sequence of corrections.

Streaming recognition is what separates dictation tools that feel instant from those that feel sluggish. The dual-pass approach - fast speculative output followed by silent correction - is the same technique used by modern LLMs for faster token generation. Applied to speech, it means you never wait for the model to "catch up" to your voice.
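On the display side, applying a correction smoothly mostly comes down to rewriting as little text as possible. The Python sketch below shows one plausible way to do that; `revise` is a hypothetical helper and this is not a description of how Yaps updates the screen.

```python
def revise(displayed, corrected):
    """Compare the fast-pass tokens on screen with the corrected hypothesis.

    Returns (keep, suffix): the first `keep` displayed tokens are stable,
    and everything after them should be replaced by `suffix`.
    """
    keep = 0
    for old, new in zip(displayed, corrected):
        if old != new:
            break
        keep += 1
    return keep, corrected[keep:]


fast_pass = ["I", "want", "to", "book", "a", "room"]
verified = ["I", "want", "to", "book", "a", "flight", "to", "Tokyo"]
print(revise(fast_pass, verified))
# (5, ['flight', 'to', 'Tokyo'])
```

Only the tail after the shared prefix changes, so the first five words never flicker while the guess "room" is swapped for the verified continuation.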

The acoustic model gives us a rough transcription. The language model refines it, using its knowledge of grammar, vocabulary, and common phrases to correct errors and resolve ambiguity.

Consider the phrase "recognize speech." Acoustically, it is almost identical to "wreck a nice beach." Without understanding context, a purely acoustic model would struggle to distinguish them. The language model knows that "recognize speech" is a coherent English phrase while "wreck a nice beach" is grammatically unusual, and weights its output accordingly.
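A common way to combine the two models is to add a weighted language-model score to the acoustic score and pick the best total. The Python sketch below illustrates that idea with made-up log-probabilities; the numbers and the `rescore` helper are assumptions for illustration only.

```python
def rescore(hypotheses, lm_weight=1.0):
    """Pick the hypothesis with the best combined score.

    hypotheses: list of (text, acoustic_logprob, lm_logprob) tuples.
    Higher (less negative) log-probabilities are better.
    """
    best = max(hypotheses, key=lambda h: h[1] + lm_weight * h[2])
    return best[0]


# Made-up scores: the acoustic model slightly prefers the wrong phrase,
# but the language model strongly prefers the coherent one.
candidates = [
    ("wreck a nice beach", -4.0, -12.0),
    ("recognize speech", -4.2, -5.0),
]
print(rescore(candidates))                 # recognize speech
print(rescore(candidates, lm_weight=0.0))  # wreck a nice beach
```

With the language model switched off (`lm_weight=0.0`), the acoustically closer but implausible phrase wins, which is exactly the failure the language model exists to prevent.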

Yaps uses a custom language model that has been fine-tuned for dictation-style speech. This means it understands patterns like:

Over time, Yaps learns your vocabulary. If you frequently use specialized terms - legal jargon, medical terminology, company-specific acronyms - the language model adapts. This happens entirely on-device: your personal language model is stored locally and never uploaded.

The technical mechanism is a small, personalized n-gram model that sits alongside the main language model. When you use a word the main model does not recognize well, the personalized model boosts its probability in future transcriptions. It is a simple technique, but it makes a dramatic difference for specialized vocabularies.

For example, a radiologist who regularly dictates reports with terms like "pneumomediastinum" or "hepatosplenomegaly" will find that Yaps quickly learns these terms and transcribes them accurately without manual correction. This adaptation is stored as a small local file - typically under 5 megabytes - that captures your unique vocabulary patterns without storing any of your actual dictated content.
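The boosting idea can be sketched with nothing more than a word counter. The Python below is a deliberately simplified unigram version with hypothetical names and made-up constants; it stores counts of words rather than any dictated text.

```python
from collections import Counter


class PersonalVocab:
    """Toy personalized model: boosts words the user has used before.

    boost_per_use and max_boost are illustrative values, not tuned settings.
    """

    def __init__(self, boost_per_use=0.5, max_boost=3.0):
        self.counts = Counter()
        self.boost_per_use = boost_per_use
        self.max_boost = max_boost

    def observe(self, words):
        """Record words from an accepted transcription (counts only)."""
        self.counts.update(w.lower() for w in words)

    def boost(self, word):
        """Log-probability bonus for a word, capped at max_boost."""
        return min(self.max_boost, self.boost_per_use * self.counts[word.lower()])

    def adjusted_score(self, word, base_logprob):
        """Main-model score plus the personal bonus."""
        return base_logprob + self.boost(word)


vocab = PersonalVocab()
vocab.observe(["pneumomediastinum", "noted", "pneumomediastinum"])
print(vocab.boost("pneumomediastinum"))  # 1.0
print(vocab.boost("hepatosplenomegaly"))  # 0.0
```

A rare term the main model scores poorly gains ground each time it is used, while the cap keeps a frequently used word from overwhelming the main model's judgment.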

Raw transcription - even good raw transcription - looks nothing like polished text. Post-processing transforms stream-of-consciousness speech into properly formatted written language.

Yaps does not require you to say "period" or "comma." Our punctuation model analyzes the transcript and inserts punctuation based on prosodic cues (pauses, intonation) and syntactic structure. It handles:

Sentence-initial capitalization is straightforward, but proper noun detection is more nuanced. The model uses a named entity recognizer to identify people, places, organizations, and products, capitalizing them correctly. It also handles common patterns like formatting numbers ("three hundred" becomes "300" or "three hundred" depending on context) and common abbreviations.

Spoken language is full of disfluencies - "um," "uh," false starts, and repeated words. Yaps automatically removes these, producing clean text that reads as if it were typed. This is one of the most noticeable differences between Yaps and basic transcription tools, which faithfully reproduce every filler word.

Disfluency removal uses a sequence-labeling model that classifies each token as either "keep" or "remove." It is trained on parallel corpora of spoken and written language, so it understands not just which words to remove but how to restructure the remaining text so it flows naturally.
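The keep/remove framing is easy to see in code. The Python sketch below replaces the trained sequence-labeling model with two hand-written rules (a filler list and immediate-repeat detection), so it is only a stand-in that shows the shape of the output.

```python
FILLERS = {"um", "uh", "er", "erm"}  # illustrative list, not exhaustive


def label_tokens(tokens):
    """Label each token 'keep' or 'remove' using simple rules.

    A trained model would make these decisions from context; these rules
    would, for example, wrongly drop a legitimate repeat such as "had had".
    """
    labels = []
    prev_kept = None
    for tok in tokens:
        low = tok.lower()
        if low in FILLERS or low == prev_kept:
            labels.append("remove")
        else:
            labels.append("keep")
            prev_kept = low
    return labels


def clean(tokens):
    """Drop every token labeled 'remove'."""
    return [t for t, lab in zip(tokens, label_tokens(tokens)) if lab == "keep"]


print(clean("um I I think uh we should go".split()))
# ['I', 'think', 'we', 'should', 'go']
```

The rule-based version shows why a learned model is worth the effort: deciding whether a repeat is a stumble or intentional requires context that fixed rules do not have.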

The technical pipeline described above can run in two fundamentally different environments: on remote cloud servers or locally on your own device. This is not just an architectural choice - it has profound implications for privacy, latency, reliability, and who ultimately controls your data. For a practical look at how these architectural differences play out across specific products, see our comparison of the best dictation apps for Mac.

Most popular speech-to-text tools - including Wispr Flow, Granola AI, Otter.ai, and many others - route your audio through cloud servers. The process typically works like this:

This approach has one advantage: cloud providers can run larger models on more powerful hardware. But this advantage has been shrinking rapidly as on-device capabilities have improved.

Cloud-based speech recognition adds network round-trip time to every transcription. On a fast connection, this might be 50-100 milliseconds. On a slow connection, hotel WiFi, or a mobile hotspot, it can balloon to 300-500 milliseconds or more. And those numbers assume the servers are not under heavy load.

On-device processing eliminates this entirely. Yaps processes audio with a consistent latency of under 20 milliseconds regardless of network conditions, because the neural network is running directly on your Mac's Apple Silicon Neural Engine. The result is text that appears to flow from your speech in real time, with no perceptible delay.

Cloud-based: 50-500ms latency depending on connection quality. Fails completely offline. Audio uploaded to third-party servers. Accuracy varies under server load. Your voice biometrics stored remotely.

On-device (Yaps): Under 20ms latency, every time. Works fully offline, anywhere. Audio never leaves your Mac. Consistent performance regardless of load. Zero biometric data exposure.

Whether speech-to-text works offline is one of the most important practical questions for anyone who relies on voice-to-text in their daily workflow. The answer depends entirely on architecture.

Cloud-dependent tools cannot work offline. If you are on an airplane, in a rural area without cell service, working in a secure facility that restricts internet access, or simply experiencing an ISP outage, cloud-based speech recognition stops completely. Your workflow grinds to a halt.

On-device tools work everywhere. Yaps runs its entire offline dictation pipeline locally on your Mac. There is no internet dependency whatsoever. You can dictate on a transatlantic flight, in a remote cabin, or in a government SCIF with the same accuracy and speed as you would at your desk with gigabit fiber. The models, the inference engine, the language model, the post-processor - everything runs on your hardware.

This is not a degraded "offline mode" with reduced accuracy. The same models run whether you are connected or not. The experience is identical.

This reliability matters especially for users who depend on voice input as a primary input method - not by preference, but by necessity. For professionals managing RSI, carpal tunnel syndrome, or other repetitive strain conditions, a voice tool that stops working when WiFi drops is not just inconvenient, it is a workflow failure. Our guide on using voice input for RSI and repetitive strain injuries explores how on-device reliability factors into accessibility-first voice workflows.

Speech is among the most sensitive data a person can produce. Your voice carries biometric identifiers unique to you. The content of your speech - emails, medical notes, legal briefs, personal journals, business strategies - is often confidential or privileged. When this data is sent to cloud servers, you are trusting third parties with some of the most private information in your digital life. We explore the full range of what voice data reveals - from emotional state to health indicators - in our article on why your voice data is more sensitive than you think.

The track record of cloud providers with voice data should give anyone pause:

Data breach exposure is massive and growing. In 2024 alone, 276 million healthcare records were breached in the United States. Speech data processed in the cloud - including medical dictations, therapy session notes, and patient records - is part of this attack surface.

Companies have been caught recording and retaining private conversations. Google paid a $68 million settlement for recording private conversations through its voice assistant without adequate user consent. Amazon, Apple, and other tech companies have all faced scrutiny for having human contractors review voice recordings from their assistants.

Biometric voice data is being harvested without consent. Fireflies.AI, a popular meeting transcription tool, was sued for harvesting biometric voice data - the unique vocal characteristics that identify you as an individual - without user consent. Voice biometrics, once captured, cannot be changed like a password.

Cloud transcription tools route data through third-party AI providers. Wispr Flow, a popular macOS dictation tool, sends all audio to OpenAI and Meta servers for processing and captures screenshots of your screen for context. Granola AI routes meeting transcriptions through OpenAI and Anthropic. When you use these tools, your data passes through not just the tool provider but also their upstream AI vendors - multiplying the number of parties with access to your private speech.

Healthcare tools built on cloud infrastructure are particularly concerning. Heidi Health, an AI medical scribe used in clinical settings, is 100% cloud-dependent on Google Cloud infrastructure. It has faced reports of hallucination issues, including fabricating patient information in clinical notes. When the stakes are a patient's medical record, cloud dependency introduces both privacy and accuracy risks.

User concern is widespread. According to industry research, 40% of voice assistant users worry about "who is listening" to their voice data. This is not paranoia - it is a rational response to a documented history of misuse.

Yaps takes a fundamentally different approach: 100% on-device processing with zero data transmission. Here is what that means in practice:

This is not just a feature - it is an architectural guarantee. There is no server to breach, no API to intercept, no third party to subpoena. Your speech data exists only on your Mac, processed by hardware you physically control.

Running sophisticated neural networks locally would have been impractical on consumer hardware even five years ago. What changed was Apple Silicon - specifically, the Neural Engine.

Every Apple Silicon chip (M1 through M4 and beyond) includes a dedicated Neural Engine - a hardware accelerator specifically designed for machine learning inference. The Neural Engine is a distinct processing unit, separate from the CPU and GPU, optimized for the matrix multiplication and tensor operations that neural networks rely on.

The M-series Neural Engine can perform up to 38 trillion operations per second (TOPS) on the M4 chip. To put that in perspective, that is more than enough computational power to run multiple speech recognition models simultaneously in real time while leaving the CPU and GPU entirely free for your other applications.

Apple's Core ML framework is the bridge between trained machine learning models and Apple Silicon hardware. When Yaps compiles its speech recognition models to Core ML format, several optimizations happen automatically:

The result is that Yaps can run its entire speech recognition pipeline - noise suppression, acoustic modeling, language modeling, and post-processing - using under 200MB of memory and negligible CPU usage. You can dictate while running heavy applications like Xcode, Final Cut Pro, or large language models without any performance impact on either your dictation or your other work.

Our acoustic model was originally trained at 32-bit floating-point precision - the standard for training deep neural networks. For on-device inference, we quantize it to 4-bit precision using a technique called GPTQ (Generative Pre-Trained Transformer Quantization).

Quantization replaces high-precision floating-point numbers with lower-precision integers. At 4-bit precision, each model parameter occupies one-eighth the memory of its 32-bit counterpart. A model that would require 1.6 gigabytes at full precision fits in approximately 200 megabytes after quantization.

The key insight is that neural networks are remarkably tolerant of reduced precision during inference (as opposed to training). The accuracy loss from 4-bit quantization is typically less than 1% on standard speech recognition benchmarks - imperceptible in real-world use. This is what makes it possible to run Whisper-class models on a MacBook Air with 8GB of RAM without breaking a sweat.

GPTQ achieves this by quantizing one layer at a time and using approximate second-order information, estimated from a small calibration set, to adjust the not-yet-quantized weights so that each layer's output error stays as small as possible. Unlike naive round-to-nearest quantization, which can degrade specific model capabilities, GPTQ preserves the most important weight relationships while aggressively compressing the rest.
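For intuition, here is a Python sketch of the naive round-to-nearest baseline together with the size arithmetic. It is explicitly not GPTQ, the helper names are hypothetical, and the 400 million parameter count is simply what 1.6 GB at 32 bits per parameter implies.

```python
def quantize_4bit(weights):
    """Naive symmetric round-to-nearest quantization to integers in [-7, 7].

    This is the simple baseline, not GPTQ: each weight is rounded
    independently with one shared scale.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floating-point weights from the integer codes."""
    return [c * scale for c in codes]


def model_size_mb(num_params, bits_per_param):
    """Storage for the parameters alone, in megabytes (10**6 bytes)."""
    return num_params * bits_per_param / 8 / 1e6


codes, scale = quantize_4bit([0.7, -0.3, 0.12, 0.0])
print(codes)  # [7, -3, 1, 0]

print(model_size_mb(400_000_000, 32))  # 1600.0
print(model_size_mb(400_000_000, 4))   # 200.0
```

The third weight illustrates the cost: 0.12 is stored as code 1 and comes back as roughly 0.10. The size figures also ignore the small overhead of storing scales alongside the codes.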

Whether on-device recognition is as accurate as cloud recognition is the question that matters most, and the answer has changed dramatically in recent years.

Three years ago, there was a meaningful accuracy gap between cloud and local speech recognition. Cloud providers could run larger models on more powerful hardware, and the difference was noticeable - especially for accented speech, noisy environments, and specialized vocabulary.

That gap has effectively closed. Several converging trends made this possible:

In our internal benchmarks, Yaps achieves a word error rate (WER) within 0.5% of leading cloud providers on standard English dictation tasks. On specialized vocabulary that benefits from personalization (legal, medical, technical), Yaps often outperforms cloud alternatives because the personalized n-gram model provides a boost that generic cloud models cannot match.
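For readers unfamiliar with the metric, word error rate is the word-level edit distance between a reference transcript and the recognizer's output, divided by the number of reference words. The Python sketch below shows the standard calculation; it is a generic illustration, not the benchmark harness behind the figures above.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Levenshtein distance over words, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(
                prev[j] + 1,             # deletion
                cur[j - 1] + 1,          # insertion
                prev[j - 1] + (r != h),  # substitution or match
            ))
        prev = cur
    return prev[-1] / len(ref)


wer = word_error_rate(
    "please book a flight to tokyo",
    "please book flight to kyoto",
)
print(round(wer, 3))  # 0.333
```

Here one deleted word and one substituted word against six reference words give a WER of about 33%. A difference of 0.5 percentage points between two systems corresponds to roughly one extra word error per 200 words.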

Cloud models still hold advantages in specific scenarios: heavily accented speech in rare language pairs, extremely noisy environments with overlapping speakers, and real-time translation between languages. These are areas where sheer model size and training data volume still matter.

For the primary use case of dictating text on your Mac - emails, documents, notes, code comments, messages - on-device models have reached parity. The remaining gaps are narrow and narrowing further with each model generation.

Yaps is purpose-built for a specific use case: real-time dictation on macOS with absolute privacy. Every architectural decision flows from that focus:

For a full walkthrough of these features and how they work together, see our introduction to Yaps.

Speech recognition has improved more in the last three years than in the preceding thirty. But we are still in the early innings. The trends shaping the next generation of this technology include:

The trajectory is clear: the capabilities that once required cloud infrastructure are steadily migrating to local hardware. Within a few years, there will be no accuracy-based reason to send voice data to remote servers. The only question is whether users will demand that their tools make this shift.


Five components: (1) audio capture and preprocessing (noise suppression, voice activity detection); (2) feature extraction (log-mel spectrograms); (3) an acoustic model — typically a transformer or conformer network — that maps audio to linguistic units; (4) a language model that resolves ambiguity using grammar and word-sequence probability; (5) a decoder and post-processor that picks the most likely transcription and adds punctuation, capitalisation, and formatting. Classical HMM systems kept these separate; modern end-to-end models like Whisper collapse stages 2–4 into a single network.

Speech recognition converts acoustic vibrations into text by predicting which sequence of words most likely produced the audio. The pipeline is: digitise the sound, extract features (a frequency-vs-time spectrogram), run a trained neural model on those features, and post-process the output into readable text. The technology dates to the 1950s, but the modern transformer era arrived between 2020 and 2024. The decisive shift is that ASR models are now small enough to run entirely on a phone or laptop, removing the need to send audio to the cloud at all.

Speech recognition works by converting audio into text through a multi-stage pipeline. First, raw audio is captured and cleaned through noise suppression and voice activity detection. Then, an acoustic model (typically a transformer neural network) converts the audio signal into linguistic features by analyzing a spectrogram representation. A language model refines the output by resolving ambiguities and applying contextual understanding. Finally, post-processing adds punctuation, capitalization, and formatting. Modern systems like Yaps run this entire pipeline locally on your Mac using Apple Silicon's Neural Engine.

OpenAI Whisper is an open-source speech recognition model trained on 680,000 hours of multilingual audio data. It uses a transformer encoder-decoder architecture and achieves near-human accuracy across dozens of languages. Yes, Whisper can run locally on a Mac through projects like whisper.cpp (a C/C++ implementation) and Argmax's WhisperKit (which compiles Whisper to Core ML for Apple Silicon). Yaps uses an optimized, Whisper-derived architecture that has been fine-tuned specifically for real-time macOS dictation with 4-bit quantization for minimal memory usage.

Yes, modern speech-to-text can work fully offline with no internet connection required. On-device speech recognition tools like Yaps run the entire transcription pipeline locally on your hardware. The neural network models, language models, and post-processing all execute on your Mac's Apple Silicon chip. There is no "degraded mode" - offline accuracy is identical to online accuracy because the same models run regardless of network connectivity. This means you can dictate on airplanes, in areas without cell service, or in secure facilities with full accuracy.

In 2026, yes - for standard dictation tasks, on-device speech recognition has effectively reached parity with cloud services. Advances in model architecture (particularly Whisper-derived models), quantization techniques like GPTQ, and dedicated ML hardware like Apple Silicon's Neural Engine have closed the gap. Yaps achieves a word error rate within 0.5% of leading cloud providers on standard English dictation. For specialized vocabulary, on-device tools with personalization can actually outperform cloud alternatives.

Apple Silicon's Neural Engine is a dedicated hardware accelerator designed specifically for machine learning inference. It can perform up to 38 trillion operations per second (on the M4 chip), providing more than enough computational power to run multiple speech recognition models simultaneously in real time. When speech models are compiled to Apple's Core ML format, they run on the Neural Engine rather than the CPU or GPU, meaning dictation consumes minimal system resources and does not impact other running applications.

Model quantization is the process of reducing the numerical precision of a neural network's parameters to decrease memory usage and increase inference speed. Yaps uses 4-bit GPTQ quantization, which reduces each model parameter from 32-bit floating-point to 4-bit integer precision - an 8x reduction in model size. A model requiring 1.6GB at full precision fits in approximately 200MB after quantization, with less than 1% accuracy loss on standard benchmarks. This is what makes it possible to run large, accurate speech models on consumer hardware like a MacBook Air.

Speech data is uniquely sensitive - it contains both biometric identifiers (your unique voice characteristics) and the content of your communications (which may include confidential business information, medical records, legal correspondence, or personal thoughts). The track record of cloud providers with voice data includes Google's $68 million settlement for recording private conversations, lawsuits against companies like Fireflies.AI for harvesting biometric voice data without consent, and 276 million healthcare records breached in 2024. On-device processing eliminates these risks entirely by ensuring your audio never leaves your physical device.

Yaps is designed as a privacy-first, offline-first native macOS application. Unlike cloud-dependent tools like Wispr Flow (which sends audio to OpenAI/Meta servers and captures screenshots) or Granola AI (which routes transcriptions through OpenAI/Anthropic), Yaps processes everything locally with zero data transmission. Unlike Electron-based alternatives, Yaps is built natively in Swift for minimal resource usage - under 200MB of memory with instant startup. It includes features like personalized vocabulary learning, smart dictation history, voice activity detection tuned for long-form dictation, and a studio editor for refining transcriptions.

Yaps is actively developing multi-speaker recognition (distinguishing different speakers in meetings), contextual awareness (adapting formatting based on which application you are using), on-device voice cloning (creating a personalized text-to-speech voice from a few minutes of your speech), and real-time local translation (speaking in one language and seeing text in another). All of these features will run entirely on-device, maintaining Yaps's commitment to privacy-first, offline-first architecture.

Yaps is a native macOS application that requires an Apple Silicon Mac (M1 or later). Installation is straightforward - download from yaps.ai, drag to your Applications folder, and start dictating. No account creation is required, no cloud configuration needed, and no internet connection necessary. The app launches in under a second and is ready to transcribe immediately. Hold Fn and speak to begin dictating anywhere on your Mac.