"Any sufficiently advanced technology is indistinguishable from magic." - Arthur C. Clarke

What Got Me Thinking

- 📄 OpenAI's Whisper paper (Robust Speech Recognition via Large-Scale Weak Supervision, 2022) - the original research that made high-quality open-source transcription a reality.

- 🎙️ Georgi Gerganov's whisper.cpp - a C/C++ port of Whisper that proved you do not need Python or a GPU to run state-of-the-art speech recognition on consumer hardware.

- 🔐 The growing concern over cloud voice data - research consistently shows that IT professionals and compliance teams treat voice recordings as among the most sensitive categories of personal data, yet most transcription services still require uploading audio to remote servers.

Every time you send audio to a cloud transcription service, you are handing over more than words. You are handing over tone, cadence, background noise, ambient conversations, and - depending on the service - a permanent recording that lives on someone else's servers. For developers, researchers, lawyers, and anyone handling sensitive material, that is not a trade-off worth making.

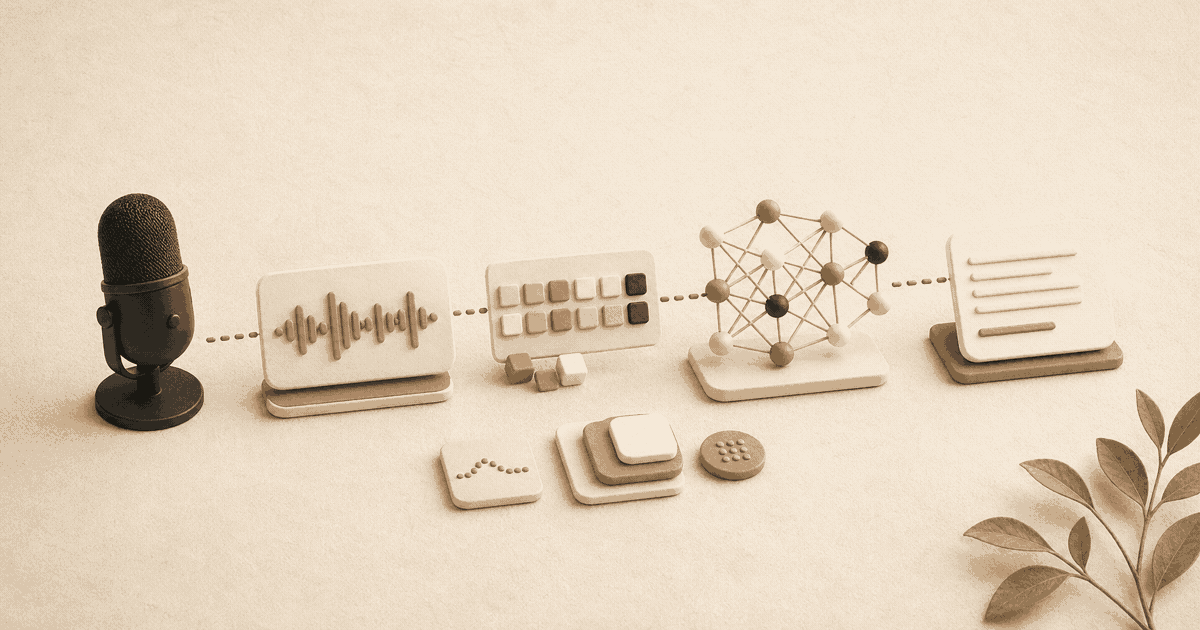

OpenAI's Whisper changed the equation. Released as an open-source model in late 2022, Whisper demonstrated that speech recognition approaching human-level accuracy could run entirely on local hardware. No API keys. No internet connection. No data leaving your machine.

But "open-source" does not mean "easy to set up." Between Python environments, model selection, dependency conflicts, and hardware acceleration, getting Whisper running locally on a Mac still involves a meaningful amount of technical work.

This guide walks you through the entire Whisper AI local transcription setup on Mac - from first terminal command to working transcription. We will cover both the original Python implementation and the faster whisper.cpp alternative, discuss realistic performance expectations on Apple Silicon, and be honest about where the DIY approach falls short.

Why Run Whisper Locally Instead of Using a Cloud API?

The short answer: privacy, cost, and control.

Cloud transcription APIs - including OpenAI's own Whisper API - charge per minute of audio. At scale, those costs add up quickly. More importantly, every audio file you upload is transmitted, processed, and potentially stored on infrastructure you do not control. For anyone working with patient data, legal recordings, proprietary research, or personal voice notes, that is a non-starter.

Running Whisper locally means:

- Your audio never leaves your machine. There is no network request, no data retention policy to read, no third-party subprocessor to worry about.

- No per-minute costs. Once the model is downloaded, transcription is free forever.

- No rate limits. Process as many files as your hardware can handle.

- No internet dependency. Works on planes, in secure facilities, and in areas with poor connectivity.

If you have read our deep dive into how speech recognition works, you already know that modern models like Whisper use transformer architectures trained on hundreds of thousands of hours of multilingual audio. The remarkable thing is that this entire model - trained weights and all - can now fit on a MacBook.

Whisper vs Parakeet on Mac

The Whisper assumption deserves a small caveat in 2026. NVIDIA's Parakeet model family - released as open source through the NVIDIA NeMo project - now matches or beats Whisper Large v3 on English benchmarks while running smaller and faster. On Apple Silicon, a Parakeet TDT 0.6B model can transcribe an hour of audio in roughly the time Whisper Large v3 takes for ten minutes. The trade-offs are that Parakeet is currently English-only (Whisper handles 99 languages) and its tooling is less mature than the Whisper ecosystem.

For a private, English-only push-to-talk setup on a Mac where latency matters most, Parakeet is worth a look. For multilingual or non-English work, Whisper is still the right pick. The whisper.cpp / nerd-dictation / MacWhisper-style frontends in this guide all work with Whisper today; Parakeet support is rolling out across the same projects.

What Hardware Do You Need for Local Whisper on Mac?

Any Mac with Apple Silicon (M1, M2, M3, M4, or their Pro/Max/Ultra variants) can run Whisper locally. Intel Macs can technically run it too, but performance will be significantly slower without Neural Engine or GPU acceleration.

Here is a realistic breakdown of what to expect:

| Model Size | Parameters | RAM Needed | Disk Space | Speed on M1 (1 min audio) | Relative Accuracy |
|---|---|---|---|---|---|
| tiny | 39M | ~1 GB | ~75 MB | ~3 seconds | Usable for notes |
| base | 74M | ~1 GB | ~142 MB | ~5 seconds | Good for clear speech |
| small | 244M | ~2 GB | ~466 MB | ~12 seconds | Strong for most uses |
| medium | 769M | ~5 GB | ~1.5 GB | ~30 seconds | Near-professional |
| large-v3 | 1.55B | ~10 GB | ~3 GB | ~55 seconds | Highest accuracy |

These timings are for whisper.cpp with Core ML acceleration. The pure Python implementation will be roughly 2–4x slower depending on your configuration.

For most users, the small or medium model offers the best balance of accuracy and speed. The large model is worth the wait only if you are transcribing accented speech, technical vocabulary, or multilingual content.
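As a rough illustration of how the table can drive model choice, here is a minimal sketch that picks the largest model whose RAM requirement fits your machine. The RAM figures are copied from the table above; the function name `pick_model` and the `headroom_gb` safety margin are hypothetical choices for this example, not part of Whisper.

```python
# Approximate RAM needs in GB, copied from the table above.
MODEL_RAM_GB = {
    "tiny": 1,
    "base": 1,
    "small": 2,
    "medium": 5,
    "large-v3": 10,
}


def pick_model(total_ram_gb, headroom_gb=2.0):
    """Return the largest model that fits in RAM, or None if none fits.

    headroom_gb is an assumed margin left for macOS and other apps.
    """
    available = total_ram_gb - headroom_gb
    best = None
    # The dict is ordered smallest to largest, so the last fit wins.
    for name, needed in MODEL_RAM_GB.items():
        if needed <= available:
            best = name
    return best
```

With the default margin, `pick_model(8)` selects medium and `pick_model(16)` selects large-v3. This only answers "what fits"; the speed column may still make a smaller model the better choice.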

How to Install Whisper AI on Mac: The Python Method

This is the original, most documented approach. It uses OpenAI's official Python package.

Step 1: Install Homebrew and Python

If you do not already have Homebrew installed, open Terminal and run:

/bin/bash -c "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/HEAD/install.sh)"

Then install Python 3.11 (Whisper works best with 3.10 or 3.11 - newer versions can cause dependency conflicts):

brew install python@3.11

Step 2: Create a Virtual Environment

Never install AI packages into your system Python. Create an isolated environment:

python3.11 -m venv ~/whisper-env

source ~/whisper-env/bin/activate

You will need to run that source command every time you open a new terminal session to use Whisper.

Step 3: Install Whisper and ffmpeg

pip install openai-whisper

brew install ffmpeg

The ffmpeg dependency handles audio format conversion. Whisper accepts most common formats (MP3, WAV, M4A, FLAC), but ffmpeg is required to decode them.

Step 4: Run Your First Transcription

whisper audio.mp3 --model small --language en

This will download the small model on first run (approximately 466 MB), then transcribe your file. Output appears in the terminal and, because the default --output_format is all, is saved as .txt, .srt, .vtt, .tsv, and .json files in the current directory.

Step 5: Tune for Your Use Case

Common flags worth knowing:

# Output only plain text (no subtitles)

whisper audio.mp3 --model small --output_format txt

# Specify output directory

whisper audio.mp3 --model small --output_dir ./transcripts

# Skip automatic language detection by naming the language (faster if you know it)

whisper audio.mp3 --model small --language en

# Use the large model for maximum accuracy

whisper audio.mp3 --model large-v3
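The same package can also be called from Python, which is handy when you want the segments rather than files on disk. The sketch below uses `whisper.load_model` and `model.transcribe` from the openai-whisper package; the helper names `transcribe_file`, `srt_timestamp`, and `segments_to_srt` are made up for this example, and the SRT rendering is a simplified stand-in for what the CLI writes.

```python
def transcribe_file(audio_path, model_name="small", language="en"):
    """Transcribe one file with the openai-whisper Python API."""
    import whisper  # imported lazily so the helpers below work without it

    model = whisper.load_model(model_name)  # downloads weights on first use
    result = model.transcribe(audio_path, language=language)
    return result  # dict with "text", "segments", and "language"


def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    millis = int(round(seconds * 1000))
    hours, millis = divmod(millis, 3_600_000)
    minutes, millis = divmod(millis, 60_000)
    secs, millis = divmod(millis, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def segments_to_srt(segments):
    """Render segments (dicts with start, end, text) as SRT text."""
    blocks = []
    for index, seg in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n"
            f"{srt_timestamp(seg['start'])} --> {srt_timestamp(seg['end'])}\n"
            f"{seg['text'].strip()}\n"
        )
    return "\n".join(blocks)
```

A typical call would be `result = transcribe_file("audio.mp3")` followed by `segments_to_srt(result["segments"])`. Loading the model once and reusing it across files avoids paying the load time on every call.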

How to Run whisper.cpp for Faster Local Transcription on Mac

The Python implementation is straightforward but not fast. Georgi Gerganov's whisper.cpp is a C/C++ reimplementation that runs significantly faster on Apple Silicon by taking advantage of the Neural Engine and Metal GPU acceleration.

Step 1: Clone and Build

git clone https://github.com/ggerganov/whisper.cpp.git

cd whisper.cpp

make

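On Apple Silicon Macs, the build detects and enables Metal support automatically. Recent whisper.cpp releases build with CMake (cmake -B build, then cmake --build build --config Release) and place the binary at build/bin/whisper-cli rather than ./main, so check the README of the version you cloned if make or ./main is not available.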

Step 2: Download a Model

bash models/download-ggml-model.sh small

This downloads the small model in GGML format (approximately 466 MB). Available sizes: tiny, base, small, medium, large-v3.

Step 3: Convert Your Audio to WAV

whisper.cpp expects 16 kHz, 16-bit mono WAV input:

ffmpeg -i audio.mp3 -ar 16000 -ac 1 -c:a pcm_s16le audio.wav
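If you convert many files, it can help to script the conversion and confirm the result really is 16 kHz mono 16-bit PCM before handing it to whisper.cpp. The following is a minimal sketch using only the Python standard library; the function names are hypothetical, and the extra `-y` flag (overwrite without prompting) is an addition not present in the command above.

```python
import subprocess
import wave


def ffmpeg_to_wav_command(source, target):
    """Build the ffmpeg conversion command as an argument list."""
    return [
        "ffmpeg", "-y",        # -y overwrites the target without prompting
        "-i", str(source),
        "-ar", "16000",        # resample to 16 kHz
        "-ac", "1",            # downmix to mono
        "-c:a", "pcm_s16le",   # 16-bit little-endian PCM
        str(target),
    ]


def convert_to_wav(source, target):
    """Run ffmpeg; raises CalledProcessError if the conversion fails."""
    subprocess.run(ffmpeg_to_wav_command(source, target), check=True)


def is_whisper_cpp_ready(wav_path):
    """Return True if the file is a 16 kHz, mono, 16-bit PCM WAV."""
    try:
        with wave.open(str(wav_path), "rb") as wav:
            return (
                wav.getframerate() == 16000
                and wav.getnchannels() == 1
                and wav.getsampwidth() == 2  # bytes per sample
            )
    except (wave.Error, EOFError, OSError):
        return False
```

Passing the command as a list rather than a shell string avoids quoting problems with file names that contain spaces.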

Step 4: Transcribe

./main -m models/ggml-small.bin -f audio.wav
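To call whisper.cpp from a script, you can assemble the same command as an argument list. This sketch assumes the binary is either `main` in the repository root (as in the step above) or `build/bin/whisper-cli` (the location used by newer CMake builds); which one exists depends on the version you built, so treat the candidate list as something to adjust. The helper names are hypothetical. The `-l` and `-otxt` options are whisper.cpp's language and text-output flags.

```python
from pathlib import Path

# Binary locations that vary by whisper.cpp version (an assumption to adjust).
CANDIDATE_BINARIES = ("build/bin/whisper-cli", "main")


def find_binary(repo_dir):
    """Return the first candidate binary that exists, or None."""
    for relative in CANDIDATE_BINARIES:
        candidate = Path(repo_dir) / relative
        if candidate.is_file():
            return candidate
    return None


def whisper_cpp_command(binary, model_path, wav_path, language="en", write_txt=True):
    """Build the whisper.cpp command line as an argument list."""
    command = [str(binary), "-m", str(model_path), "-f", str(wav_path), "-l", language]
    if write_txt:
        command.append("-otxt")  # also write the transcript to a .txt file
    return command
```

The resulting list can be passed to `subprocess.run(command, check=True)` from inside the whisper.cpp directory.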

Optional: Enable Core ML Acceleration

For even faster performance on Apple Silicon, you can build with Core ML support:

make clean

WHISPER_COREML=1 make -j

Then generate Core ML models:

pip install ane_transformers openai-whisper coremltools

./models/generate-coreml-model.sh small

This adds complexity but can improve transcription speed by 30–50% on M-series chips.

Python (openai-whisper): Easier to install. Slower execution - roughly 2–4x slower than whisper.cpp on Apple Silicon for the medium model. Higher memory usage. Better for batch processing where speed is not critical.

whisper.cpp: Requires compilation. Significantly faster - often faster than real-time for small/medium models on M-series Macs. Lower memory footprint. Better for frequent or near-real-time use.

Real Limitations of Running Whisper Locally

Here is where honesty matters. Running Whisper locally is genuinely useful, but it is not a polished product experience. After helping developers get this set up, we have seen the same friction points come up repeatedly.

It Is File-Based, Not Real-Time

Out of the box, Whisper processes audio files. It does not listen to your microphone and transcribe in real time as you speak. Building a real-time pipeline requires additional code - capturing audio from the microphone, chunking it into segments, feeding those segments to the model, and stitching the output together. Projects like whisper-live and buzz attempt this, but they add their own complexity and dependencies.
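To make the chunk-and-stitch idea concrete, here is a deliberately naive sketch of the two bookkeeping steps: computing overlapping chunk boundaries over a stream of samples, and joining chunk transcripts while dropping words repeated at a boundary. The function names, the 5-second chunk length, and the 1-second overlap are illustrative assumptions; real projects typically add voice activity detection and smarter merging.

```python
def chunk_bounds(total_samples, sample_rate=16000, chunk_seconds=5.0, overlap_seconds=1.0):
    """Return (start, end) sample indices for overlapping chunks."""
    chunk = int(chunk_seconds * sample_rate)
    step = chunk - int(overlap_seconds * sample_rate)
    if chunk <= 0 or step <= 0:
        raise ValueError("chunk_seconds must be positive and larger than overlap_seconds")
    bounds = []
    start = 0
    while start < total_samples:
        end = min(start + chunk, total_samples)
        bounds.append((start, end))
        if end == total_samples:
            break
        start += step
    return bounds


def stitch(texts):
    """Join chunk transcripts, dropping words repeated across a boundary."""
    words = []
    for text in texts:
        new = text.split()
        overlap = 0
        # Longest suffix of what we have that matches a prefix of the new chunk.
        for k in range(min(len(words), len(new)), 0, -1):
            if [w.lower() for w in words[-k:]] == [w.lower() for w in new[:k]]:
                overlap = k
                break
        words.extend(new[overlap:])
    return " ".join(words)
```

The overlap exists so that a word cut in half at a chunk edge is heard whole in the next chunk; the stitching step then removes the duplicate. Exact word matching is fragile when the model transcribes the overlap differently each time, which is part of why real-time pipelines add complexity.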

Environment Management Is an Ongoing Cost

Python environments break. Dependencies conflict. A macOS update can invalidate your build. If you are not already comfortable maintaining Python virtual environments, expect to spend time troubleshooting pip install failures, version mismatches, and missing system libraries.

No System-Wide Integration

Whisper runs in a terminal. It does not integrate with your text editor, your email client, or your browser. If you want transcription output to appear where you are actually working, you need to build that integration yourself - clipboard scripts, file watchers, or custom automation.

Model Updates Require Manual Work

When OpenAI releases a new Whisper model (like the jump from large-v2 to large-v3), you need to manually download the new weights, potentially update the Python package or rebuild whisper.cpp, and verify that nothing broke.

No Text-to-Speech

Whisper is speech-to-text only. If you also want text-to-speech capabilities - having your Mac read text aloud to you - that is an entirely separate system to set up and maintain.

How a Polished Alternative Compares to DIY Whisper

If you have read this far and thought "I just want private transcription that works without all this setup," that is a fair reaction. The DIY approach is rewarding if you enjoy the technical work, but it is not for everyone.

Yaps exists precisely because we went through this process ourselves and wanted something better. It is a native companion - built with Tauri and Rust, not Electron - that handles on-device speech-to-text and text-to-speech without any of the setup described above. Yaps is available on macOS today, with Windows and Android support on the way.

DIY Whisper: Install Python, manage virtual environments, download models manually, build from source, convert audio formats, write integration scripts, maintain everything across OS updates. Works in the terminal only.

Yaps: Download and open. Hold Fn to dictate anywhere on your system. All processing on-device, no internet required. Under 200MB RAM, starts in under one second. Works across every app. No configuration needed.

The fundamental difference is that Yaps is designed as a system-wide companion that fits into how you already work, not a command-line process that requires you to change your workflow. Hold Fn and start speaking - your words appear wherever your cursor is. Hold Option+Fn and Yaps reads selected text aloud. That is it.

Both approaches keep your audio completely private. The question is whether you want to build and maintain the infrastructure yourself or use something purpose-built. For developers who enjoy tinkering, the DIY route is a worthy project. For everyone else - and for developers who would rather spend their time on their actual work - Yaps handles the complexity for you.

When to Choose DIY Whisper Over a Polished App

The DIY approach makes sense in specific scenarios:

- Batch processing large audio archives. If you have hundreds of hours of recorded audio to transcribe, running Whisper in a script with a loop is efficient and cost-effective.

- Custom model fine-tuning. If you need Whisper to recognize domain-specific vocabulary (medical terms, legal jargon, proprietary product names), fine-tuning the model requires the Python implementation.

- Integration into existing pipelines. If Whisper is one component of a larger automated workflow - say, transcribing podcast audio, then summarizing with an LLM, then publishing - the command-line interface slots in naturally.

- Learning and experimentation. If you want to understand how transformer-based speech recognition works at a practical level, there is no substitute for running the model yourself, inspecting the outputs, and tweaking the parameters.
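For the batch-processing case, a small script is usually enough. The sketch below walks a folder, skips files that already have a transcript, and loads the model once for the whole run. It relies on `whisper.load_model` and `model.transcribe` from the openai-whisper package; the function names, the folder layout, and the one-.txt-per-audio-file convention are assumptions made for this example.

```python
from pathlib import Path

# Formats named earlier in this guide; extend as needed.
AUDIO_EXTENSIONS = {".mp3", ".wav", ".m4a", ".flac"}


def pending_files(audio_dir, transcript_dir):
    """Return audio files that do not yet have a matching .txt transcript."""
    audio_dir, transcript_dir = Path(audio_dir), Path(transcript_dir)
    pending = []
    for path in sorted(audio_dir.iterdir()):
        if path.suffix.lower() not in AUDIO_EXTENSIONS:
            continue
        if not (transcript_dir / f"{path.stem}.txt").exists():
            pending.append(path)
    return pending


def transcribe_archive(audio_dir, transcript_dir, model_name="small"):
    """Transcribe every pending file, loading the model only once."""
    import whisper  # lazy import: only needed when transcription runs

    transcript_dir = Path(transcript_dir)
    transcript_dir.mkdir(parents=True, exist_ok=True)
    model = whisper.load_model(model_name)
    for path in pending_files(audio_dir, transcript_dir):
        result = model.transcribe(str(path))
        out_path = transcript_dir / f"{path.stem}.txt"
        out_path.write_text(result["text"].strip() + "\n", encoding="utf-8")
```

Because finished files are skipped, the script can be interrupted and rerun without repeating work, which matters when an archive takes hours to process.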

For daily dictation, voice notes, and real-time transcription as part of your normal workflow, a purpose-built companion like Yaps will save you hours of setup and ongoing maintenance. Our guide to offline dictation covers more about why on-device processing matters for daily use.

If you decide to run Whisper locally for batch processing but want system-wide dictation for daily use, the two approaches are not mutually exclusive. Use Whisper scripts for your audio archives and Yaps for everything else.

Troubleshooting Common Whisper Installation Issues on Mac

"No module named 'whisper'"

You are likely running the wrong Python binary. Confirm you are inside your virtual environment:

source ~/whisper-env/bin/activate

which python # Should point to your venv, not /usr/bin/python3

"ffmpeg not found"

Install it with Homebrew:

brew install ffmpeg

Then verify:

ffmpeg -version
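The two checks above can be folded into a single preflight script you run whenever something stops working. This is a minimal sketch using only the standard library; the function names and the returned dictionary keys are hypothetical.

```python
import importlib.util
import shutil
import sys


def in_virtualenv():
    """True when Python runs inside a venv (prefix differs from base prefix)."""
    return sys.prefix != sys.base_prefix


def preflight():
    """Return a dict of check name -> bool for the common setup problems."""
    return {
        "virtualenv_active": in_virtualenv(),
        "whisper_importable": importlib.util.find_spec("whisper") is not None,
        "ffmpeg_on_path": shutil.which("ffmpeg") is not None,
    }
```

Printing `preflight()` from the Python you actually use for transcription shows at a glance which of the three prerequisites is missing.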

Slow Performance on Apple Silicon

If Whisper is running surprisingly slowly, check two things. First, confirm you are using an ARM-native Python build, not an x86 version running under Rosetta:

python -c "import platform; print(platform.machine())"

# Should print "arm64", not "x86_64"

Second, for whisper.cpp, ensure Metal support is enabled by checking the build output for "Metal" references.

Out of Memory Errors with Large Models

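The large-v3 model needs approximately 10 GB of RAM. If your Mac has 8 GB, stick with the small or medium model. The model weights must fit in memory, so the only real workaround is a smaller footprint: the whisper.cpp download script also offers quantized variants (for example large-v3-q5_0) that trade a little accuracy for lower memory use.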

Core ML Model Generation Fails

Core ML model generation requires specific versions of coremltools and ane_transformers. If it fails, try pinning versions:

pip install coremltools==7.1 ane_transformers==0.1.4

Performance Benchmarks: What to Realistically Expect

We ran Whisper on a 5-minute English podcast clip across different configurations on an M2 MacBook Air with 16 GB RAM. These numbers are representative, not definitive - your results will vary with audio quality, speaker accents, and background noise.

| Configuration | Model | Time to Transcribe | Real-Time Factor |
|---|---|---|---|
| Python (openai-whisper) | small | 48 seconds | 0.16x |
| Python (openai-whisper) | medium | 2 min 15 sec | 0.45x |
| whisper.cpp | small | 14 seconds | 0.047x |
| whisper.cpp | medium | 38 seconds | 0.127x |
| whisper.cpp + Core ML | small | 9 seconds | 0.03x |
| whisper.cpp + Core ML | medium | 24 seconds | 0.08x |

A real-time factor below 1.0 means the transcription completes faster than the audio duration. whisper.cpp with Core ML on the small model processes audio roughly 33x faster than real-time - fast enough that the bottleneck becomes reading the output, not waiting for it.
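The arithmetic behind the last column is simple enough to reproduce for your own runs. The sketch below recomputes two rows of the table; the function names are illustrative, and the inputs are the timings reported above for the 5-minute clip.

```python
def real_time_factor(processing_seconds, audio_seconds):
    """Processing time divided by audio duration; below 1.0 beats real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def speedup(processing_seconds, audio_seconds):
    """How many times faster than real time (the inverse of the factor)."""
    return audio_seconds / processing_seconds


clip = 5 * 60  # the 5-minute clip from the table, in seconds

print(round(real_time_factor(9, clip), 3))    # whisper.cpp + Core ML, small -> 0.03
print(round(speedup(9, clip), 1))             # -> 33.3
print(round(real_time_factor(135, clip), 2))  # Python, medium (2 min 15 sec) -> 0.45
```

To benchmark your own setup, time a transcription with a stopwatch or `time`, then pass the elapsed seconds and the clip length to `real_time_factor`.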

These benchmarks are for file-based transcription. Real-time microphone transcription introduces additional latency from audio capture, chunking, and output assembly. Expect end-to-end latency of 1–3 seconds per utterance with a well-configured whisper.cpp setup.

What Privacy Do You Actually Get From Local Whisper?

Running Whisper locally gives you genuine, verifiable privacy. The model runs entirely on your CPU and GPU. No audio data is transmitted anywhere. Once the model weights are downloaded, you can verify this yourself by running Whisper with your network connection disabled - it works identically.

This is a fundamentally different privacy model from cloud services that claim to "delete your data after processing." With local processing, there is no data to delete because it never left your device in the first place.

For professionals working with sensitive content - medical dictation, legal recordings, confidential business discussions - this is not a nice-to-have. It is a requirement. Our coverage of voice data privacy explores why on-device processing is the simplest architecture to reconcile with obligations such as HIPAA and attorney-client privilege.

Final Thoughts

Running Whisper AI locally on your Mac is a genuinely practical project. The models are capable, the hardware is sufficient, and the privacy benefits are real. If you enjoy the command line and want maximum control over your transcription pipeline, the setup described in this guide will serve you well.

But technology is at its best when it disappears. The hours spent configuring Python environments, downloading models, converting audio formats, and writing integration scripts are hours not spent on the work you actually care about. That is why we built Yaps - to give you the same on-device privacy without any of the setup. Hold a key, speak, and your words appear. No terminal required.

Whether you choose the DIY path or the ready-made one, the important thing is that your voice stays yours. Every word, processed on your machine, under your control.


Frequently Asked Questions

Can Whisper AI run completely offline on a Mac?

Yes. Once you have downloaded the model weights (which range from 75 MB for the tiny model to approximately 3 GB for large-v3), Whisper runs entirely offline. No internet connection is needed for transcription. The model, the inference engine, and all processing happen locally on your Mac hardware.
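Before going offline, it is worth confirming the weights are actually on disk. The sketch below lists cached models for the Python package. It assumes openai-whisper's default download location (`~/.cache/whisper`, or `$XDG_CACHE_HOME/whisper` if that variable is set) and its `.pt` file naming; if you passed a custom download directory, point `cache_dir` at that instead. The function names are hypothetical.

```python
import os
from pathlib import Path


def whisper_cache_dir():
    """Default openai-whisper download location (assumed, see note above)."""
    base = os.getenv("XDG_CACHE_HOME", os.path.join(os.path.expanduser("~"), ".cache"))
    return Path(base) / "whisper"


def cached_models(cache_dir=None):
    """Return {model_name: size_in_mb} for each .pt file in the cache."""
    cache_dir = Path(cache_dir) if cache_dir is not None else whisper_cache_dir()
    if not cache_dir.is_dir():
        return {}
    return {
        path.stem: round(path.stat().st_size / 1_000_000, 1)
        for path in sorted(cache_dir.glob("*.pt"))
    }
```

An empty result means the next transcription will try to download a model, which will fail without a connection. whisper.cpp keeps its GGML files in its own models directory instead.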

Which Whisper model size should I use on my Mac?

For most users, the small model (244M parameters, ~466 MB) offers the best balance of speed and accuracy. It handles clear English speech well and runs quickly on Apple Silicon. If you regularly transcribe accented speech, technical vocabulary, or non-English languages, step up to the medium model. The large-v3 model delivers the highest accuracy but requires 10 GB of RAM and is significantly slower.

Is whisper.cpp faster than the Python version of Whisper?

Yes, significantly. In our testing on an M2 MacBook Air, whisper.cpp processed audio roughly 3.5x faster than the Python implementation for the same model size, and roughly 5x faster with Core ML acceleration enabled. The whisper.cpp project is specifically designed to take advantage of Apple Silicon hardware, including the Neural Engine and Metal GPU acceleration.

Does running Whisper locally on Mac use a lot of battery?

Transcription is computationally intensive and will draw noticeable power during processing. For the small model on Apple Silicon, expect similar battery impact to running a video call. Batch transcribing hours of audio will drain your battery faster than normal use. If battery life is a concern, plug in before processing large audio files.

Can Whisper transcribe in real time from my microphone?

Not out of the box. The standard Whisper implementation is designed for file-based transcription. Real-time microphone transcription requires additional software to capture audio, segment it into chunks, feed those chunks to the model, and assemble the output. Several open-source projects add this capability, but they introduce additional complexity. Yaps provides real-time, system-wide dictation natively without any additional setup.

How accurate is local Whisper compared to cloud transcription services?

The accuracy is comparable to OpenAI's Whisper API because both run the same model family. OpenAI's documentation has described the hosted whisper-1 model as based on large-v2, so a local large-v3 run may differ slightly. The large-v3 model has been independently benchmarked at word error rates competitive with commercial services across multiple languages. The main difference is where the computation happens - on your device versus on a remote server.

Do I need a GPU to run Whisper on Mac?

No dedicated GPU is required. On Apple Silicon Macs (M1 and later), Whisper leverages the integrated GPU and Neural Engine through Metal and Core ML. Intel Macs can run Whisper on CPU alone, though performance will be notably slower. There is no need for an external GPU or eGPU setup.

Can I fine-tune Whisper for specialized vocabulary?

Yes, using the Python implementation. Fine-tuning requires a dataset of audio paired with correct transcriptions in your domain. This is a more advanced workflow involving PyTorch and typically takes several hours on consumer hardware. Fine-tuning is worthwhile if you regularly transcribe content with domain-specific terminology - medical terms, legal jargon, or proprietary product names - that the base model struggles with.

How does local Whisper transcription compare to Apple's built-in Dictation?

Apple's built-in Dictation on macOS now supports on-device processing, which is a strong privacy baseline. However, it is designed for real-time dictation of short passages, not transcription of audio files. Whisper supports 99 languages (compared to Apple Dictation's more limited set), handles longer audio, and produces timestamped subtitle files. The trade-off is that Whisper requires manual setup and does not integrate system-wide without additional work. For a detailed comparison of dictation options on Mac, see our complete Mac dictation guide.

Is my transcription data truly private when using local Whisper?

Yes, verifiably so. You can confirm this by disconnecting from the internet and running a transcription - it works identically. No audio is transmitted, no telemetry is sent, and no logs are uploaded. Your data exists only on your local filesystem. This is the same privacy architecture that Yaps uses, with the difference that Yaps packages it into a native companion that requires no technical setup.